Out of Sight, Out of Mind: Can Video World Models Simulate A World Beyond the Pixel Frame?

Events in the physical world, such as water being poured into a glass or ice melting on a hot surface, unfold independently of our observation. For instance, these processes continue when we close our eyes or look away. Today’s video world models “simulate” the world by generating pixel frame observations. But can they continue to simulate the world when observations are unavailable?

To answer this question, we probe video world models by introducing “observation control”, which interrupts the pixel-frame observation of the evolving object(s) for some duration through occlusion, illumination dimming, or camera lookaway, as shown in the videos below. A proper world model, we believe, should keep evolving the state despite the interruption in pixel-level observation; in other words, it should “decouple” state evolution from observation.

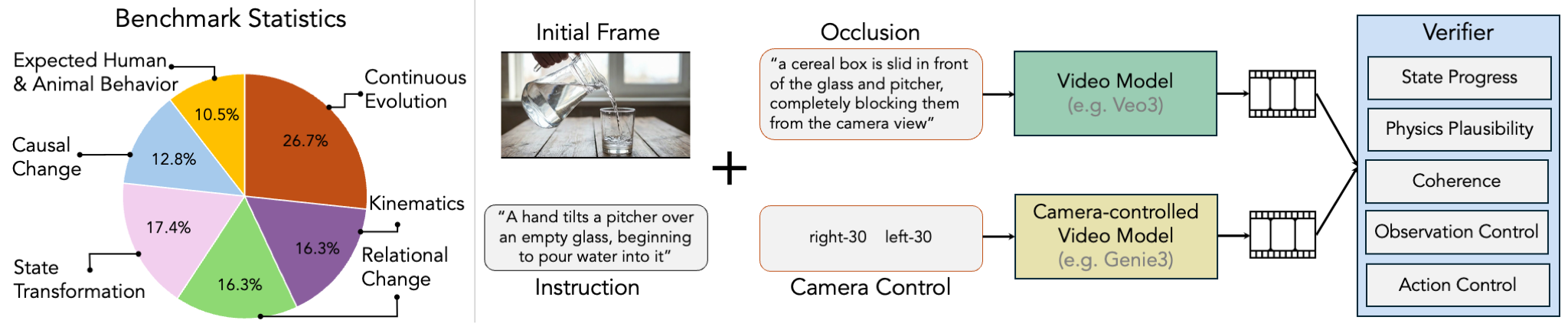

Introducing STEVO-Bench

STEVO-Bench is a benchmark designed to probe whether existing closed- and open-source video models and camera-controlled video models can decouple state evolution from observation. STEVO-Bench consists of 225 unique tasks spanning 6 categories of naturally occurring evolutions in the physical world: continuous process, kinematics, relational change, causal change, state transformation, and expected human/animal behavior. The tasks reflect physical events that real-world agents routinely observe: a hand turns on a stove burner and a wet pan begins to heat, a pitcher pours water into a glass, a wall switch is toggled and a lamp turns on, a tire pump inflates a deflated tire, a railroad crossing gate descends as a train approaches, or a hand turns a manual valve and a sprinkler activates. The diversity of tasks and their grounding in everyday physical interactions make STEVO-Bench directly relevant to the challenges faced by embodied agents deployed in the real world.

Understanding and quantifying how models fail

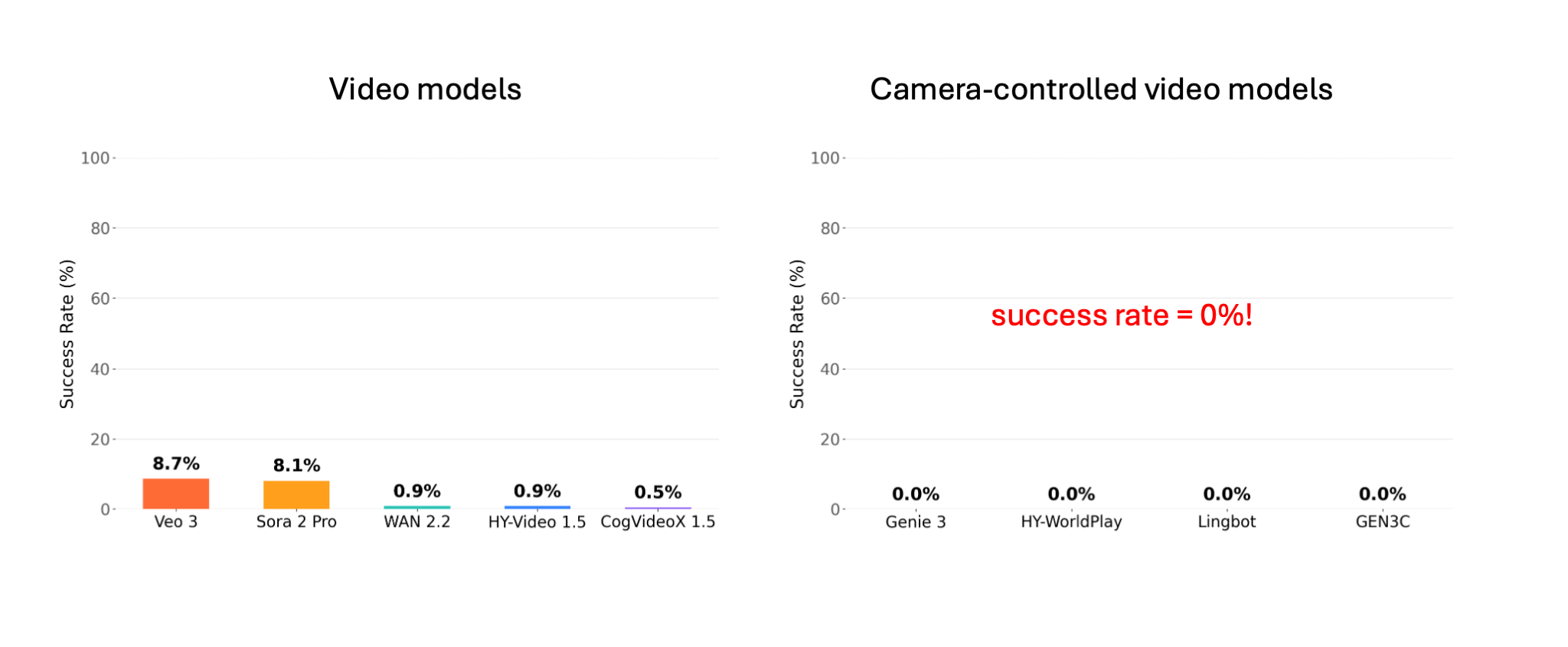

Perhaps unsurprisingly, current models largely fail to evolve the state as expected. The figure below shows the (very low) success rate of both image-/text-to-video models (like Veo 3) and camera-controlled video models (like Genie 3). In particular, camera-controlled models show an overall 0% success rate due to their strong bias toward static scenes.

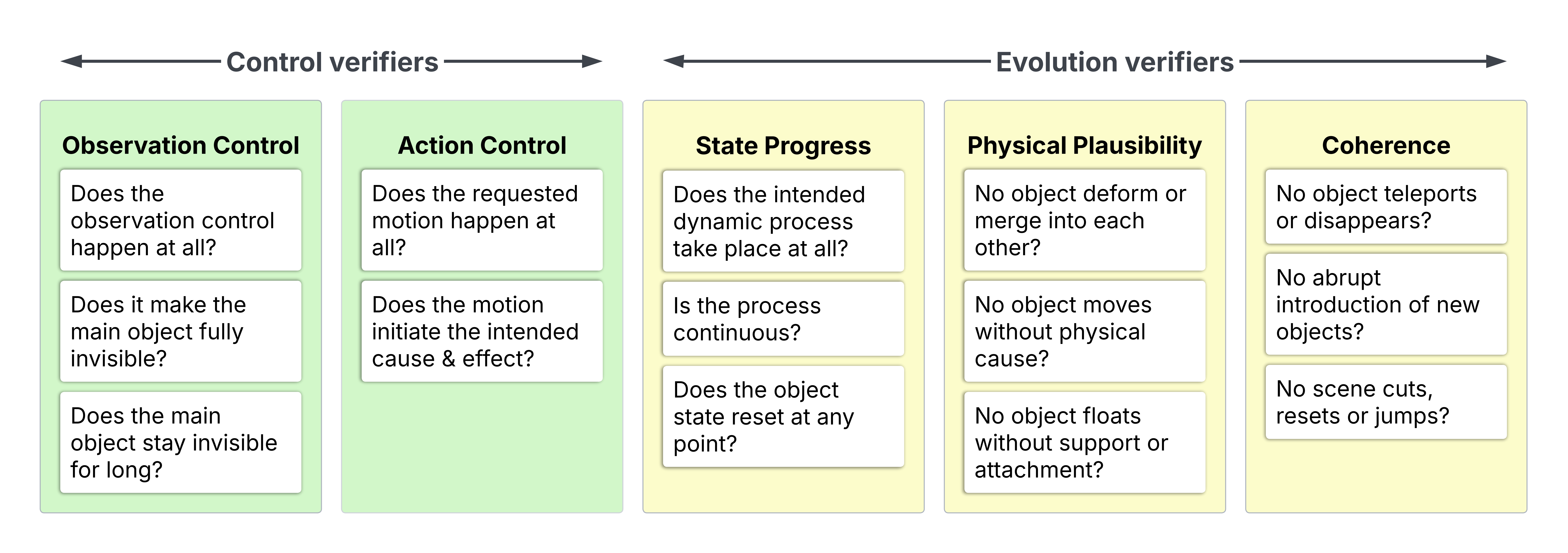

“Did the state evolve correctly” may sound like a simple question, but it has a few implicit dimensions.

- Does the state progress in the correct qualitative direction (e.g. if water is poured into a cup, the water level grows higher), regardless of artifacts?

- Does the process obey physics (e.g. no unnecessary deformation, bending, anti-gravity)?

- Is the scene coherent in all other aspects (no object disappearing/reappearing/teleporting/changing appearance)?

- Finally, we need to ensure that the observation control (such as occlusion or camera lookaway) is correctly applied, as is the action control that kickstarts the state evolution (e.g., “a scissor cuts a rope”).

To quantify these various aspects, we build automatic VLM-based verifiers for each dimension, as detailed in Figure 3. In order to correctly evaluate evolution success under observation control, we only calculate state progress, physics plausibility and coherence on examples that correctly obey both action and observation control. To reduce control violations, we use the controls that are easiest for each model type: occlusion or “light off” for video models, and camera lookaway for camera-controlled models. More analysis can be found in our paper.
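The gating described above can be sketched in a few lines of Python. This is a minimal illustration, not the benchmark's actual code: the `Verdict` fields, the `summarize` function, and the rule that overall success requires all three quality checks to pass are assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Verdict:
    """Per-video verifier outputs. All field names are hypothetical."""
    action_ok: bool       # the action control was obeyed
    observation_ok: bool  # the observation control was correctly applied
    progress: bool        # state moved in the correct qualitative direction
    physics: bool         # the process is physically plausible
    coherent: bool        # the rest of the scene stays coherent


def rate(flags: list[bool]) -> Optional[float]:
    """Fraction of True flags, or None when there is nothing to score."""
    return sum(flags) / len(flags) if flags else None


def summarize(verdicts: Iterable[Verdict]) -> dict:
    """Score quality dimensions only on videos that obey both controls."""
    verdicts = list(verdicts)
    valid = [v for v in verdicts if v.action_ok and v.observation_ok]
    return {
        "n_total": len(verdicts),
        "n_valid": len(valid),
        "state_progress": rate([v.progress for v in valid]),
        "physics_plausibility": rate([v.physics for v in valid]),
        "coherence": rate([v.coherent for v in valid]),
        # Assumption: a video succeeds only if all three checks pass.
        "success": rate([v.progress and v.physics and v.coherent for v in valid]),
    }
```

Filtering before scoring keeps a control violation (for example, an occluder that never appears) from being counted as either a success or a failure of state evolution.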

Video models: evolution stopping and incoherence

Two key failure modes we observe for video models are:

- Evolution stops when the observation control (occlusion/illumination dimming) is introduced.

- The scene is incoherent before and after the observation control.

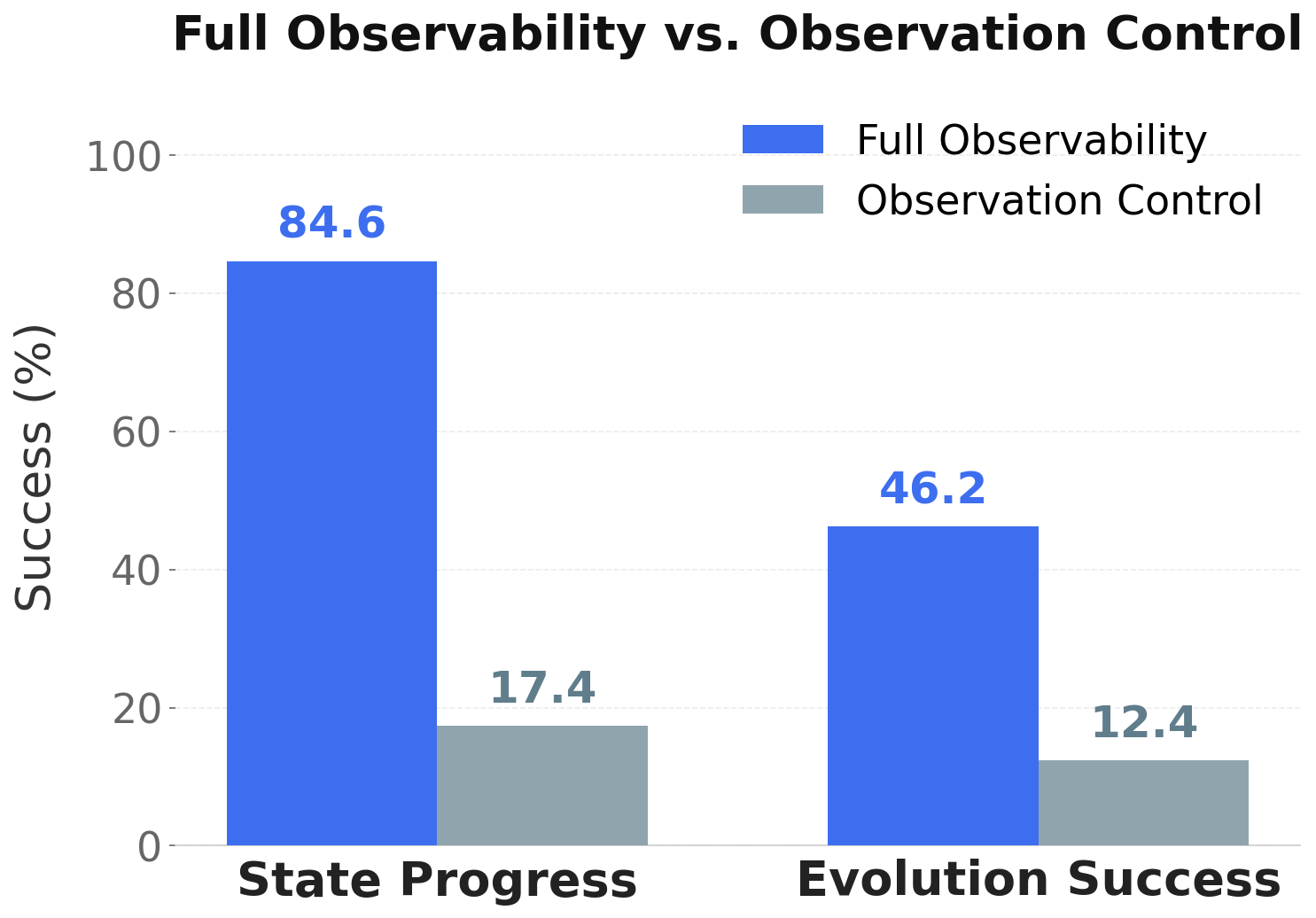

To fully understand the effect of observation control, we also run the same state evolution experiments under full observability for comparison.

The videos above show two examples, one for each failure mode. In the first, the “light off” control stops the mattress from deflating, whereas without “light off” the mattress deflates correctly. In the second, the sponge correctly becomes wet and darker when water pours onto it under full observability, but when the cardboard occlusion is introduced, the rectangular sponge turns round.

In general, when observation control is introduced, we observe a steep decrease in the overall state evolution success rate, as well as the state progress rate (which measures whether the state progresses at all, despite physical inaccuracies or artifacts). The figure below shows the contrast between the observation-controlled and the full-observability setting.
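One way to make this comparison concrete is to pair each task's outcome under full observability with its outcome under observation control. The sketch below assumes per-task boolean outcomes keyed by a task id; the function name `compare_settings` and the input format are hypothetical and not taken from the benchmark.

```python
def compare_settings(full: dict[str, bool], controlled: dict[str, bool]) -> dict:
    """Compare per-task outcomes across the two settings.

    `full` and `controlled` map a task id to whether the evolution succeeded
    under full observability and under observation control, respectively.
    Only tasks present in both settings are compared.
    """
    shared = sorted(full.keys() & controlled.keys())
    if not shared:
        raise ValueError("the two settings have no tasks in common")
    n = len(shared)
    full_rate = sum(full[t] for t in shared) / n
    controlled_rate = sum(controlled[t] for t in shared) / n
    return {
        "n_tasks": n,
        "full_rate": full_rate,
        "controlled_rate": controlled_rate,
        "drop": full_rate - controlled_rate,
        # Tasks the model can evolve when watched but not when unobserved.
        "broken_by_control": [t for t in shared if full[t] and not controlled[t]],
    }
```

The `broken_by_control` list isolates the cases most relevant here: the model demonstrably can produce the evolution, and fails only once observation is interrupted.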

Camera-controlled models: strong bias toward “freezing” the scene

Camera-controlled models, when instructed to “look away” from an evolving process, largely fail to continue the evolution and tend to keep the scene static as the camera moves. We observe similar behavior across models, especially open-source models like Hunyuan-WorldPlay, LingBot, AETHER, and GEN3C.

The contrapositive: dynamics makes camera control hard

The failures above show that when the camera turns, the scene “freezes”. We observe the contrapositive as well: on the occasions where dynamics does occur in camera-controlled models, camera control becomes hard. Genie and LingBot are the only models that sometimes evolve the state. For both, we observe that while evolution is happening, it is difficult to shift the view at the same time.

We hypothesize that this inability to simultaneously enable camera control and state evolution might be attributed to the training data. These models usually train on a combination of general video data, rendered 3D data, and gaming data.

- In order to obtain diverse camera trajectories, camera-controlled models train on rendered videos of static scenes. For example, WorldPlay trains on renderings of reconstructed 3D Gaussian Splats, and LingBot trains on scene renderings from Unreal Engine. These videos move the camera but do not contain dynamics, which might reinforce the static bias, and furthermore associate complex camera movement with static scenes.

- The general video datasets that models train on, e.g., Sekai and DL3DV, mainly contain indoor and outdoor scenes with many static objects.

- The gaming data, which contain (action, video) pairs, usually don’t contain rich, natural physical evolutions that occur in the real world.

In a sense, the capability to “decouple” state evolution from observation means that the model, regardless of its output format, internally understands the dynamics in 4D (i.e. dynamic, and allowing view change). However, we do not have such “4D ground truth” at large scale in the pretraining data. It remains to be seen whether such “4D understanding” can emerge purely from scaling existing data types, or whether it calls for changes in architecture, representation, or training strategy.

Memory modules are not enough - they reinforce the static bias

There has been increasing attention to memory-enhanced video generation, with models like WorldMem, Spatia, and LSM, because they allow for an explicit “persistent state” that is not tied to the generated pixels. However, truly simulating a world beyond the frame requires more than verbatim memorization of previously seen scenes. While some memory models are constrained to specialized domains such as Minecraft or RealEstate10k, we evaluate VMem, a relatively general model trained on indoor and outdoor scenes.

As shown in the video above, VMem enables faithful memorization of the initial scene, but fails to evolve the scene (the ball does not roll down). This highlights that verbatim memorization does not help video world models in “simulating” the world beyond the frame. STEVO-Bench calls for future architecture designs to move beyond static scene consistency towards a true world model that can model evolutions, decoupled from observation.

Where do we go from here?

STEVO-Bench highlights critical limitations of current video-based world models in modeling state evolution. This is not merely an intellectual investigation. If we want our world models to generate larger worlds and enable longer-horizon interactions, the visible frame observation for any given moment becomes an increasingly small fraction of the generated world. In other words, the majority of the generated world is unobserved. Given this, it becomes critical that the world models can continue evolving the world state, decoupled from observation.

Findings from STEVO-Bench and its verifiers on various axes expose potential fundamental limitations in data and architecture, such as the lack of 4D-aware data (with both dynamics and view control), and the static-scene bias of memory architectures. We hope STEVO-Bench can inspire discussions and new ideas toward world models that can correctly simulate the world beyond the pixel frame. As we work on a solution, we wanted to share our benchmark and early thoughts in this blog to start some discussions and hear what you think!
